This post has three purposes:

To summarize how the modern frenzy over college admissions got started;

To explain why “Best Colleges”-style ranking systems, pioneered by US News and applied elsewhere, were initially a response to that frenzy but eventually made it worse; and

To highlight some steps now underway to rein in the excesses—and some ways in which the college-admission process could become less destructive for all involved.

The gist of the argument is that for a generation-plus, colleges have been ranked largely on the advantages their students already have, when coming into the school. What their test scores were, how tough a selective-admissions process they survived, the range of experiences their family backgrounds had exposed them to. Plus the advantages a college itself has, starting with its endowment.

All these things have their place. But a far more useful standard of comparison is what students take from their years of education—at a four-year university, a community college, a technical institute, or wherever. How well does the experience equip them for economic life? What kinds of citizens and family members does it help them become? What opportunities does it create?

The good news is that more of these useful measures are emerging. My main purpose today is to direct attention to the most recent illustration. (And, a housekeeping note: the first few posts on this site have been long ones. Short dispatches are next in the queue.)

-Why now for this discussion? There’s never a bad time. But the beginning of a school year is when newly arrived college students are thinking about the places they’ve chosen. It’s also a time when high school students and their families are thinking about where they should apply.

-Why me for this discussion? I’ve been involved in this question for quite a while. For two years I was near the center of it, as the editor of US News when its rankings were at their peak of influence.

If you’d like to skip the buildup and go straight to the suggestions, they’re down in section 3. I have a “further reading” list at the end.

Here we go:

1) How it happened: college admission as status marker and ‘positional good’

The coarseness of the recent “Varsity Blues” college admissions scandal was shocking—the huge payoffs, the flat-out fraud. But the ambitions and desperation it revealed can’t have come as a surprise.

“Of course” Hollywood stars and big financiers would pay to move their kids ahead in the college-admissions queue. It’s one more thing that money can buy.

Even parents who aren’t rich or famous know the riff: You worry about getting your kids into the right nursery school, so they can get into the right elementary school, so they can get into the right high school, and so on.

But the news-behind-the-news is that educated-class American life had not always been distorted in this way. Only since World War II, and in earnest only since the 1970s, has elite college admission been converted into one more fought-over marker of privilege and status. A “positional good,” as the economists would say: something I have, that puts me ahead of you.

Elite colleges have been around for a long time. Harvard was 140 years old by the time its alumnus John Adams signed the Declaration of Independence. All but one of today’s other Ivies also were up and running long before then. (The “new” one is Cornell, founded in 1865.)

But until the mid-1900s, “selective” college admission had been a relatively limited, regional, family-based, prep-school-and-blue-blood phenomenon. As late as 1940, less than 10% of the U.S. population had been to college at all, and less than 5% held a four-year degree. (The proportion with a four-year degree is now around 38% and rising.)

Even when college admissions expanded rapidly after World War II, thanks to the GI Bill and other changes, getting into college was not that huge an ordeal. Through the early Baby Boomer era, college attendance patterns were still largely regional. Northeastern prep and boarding schools sent flotillas of grads to the elite Northeastern universities. People from the West Coast were much more likely than now to stay in the West; people from the South, in the South, and so on.

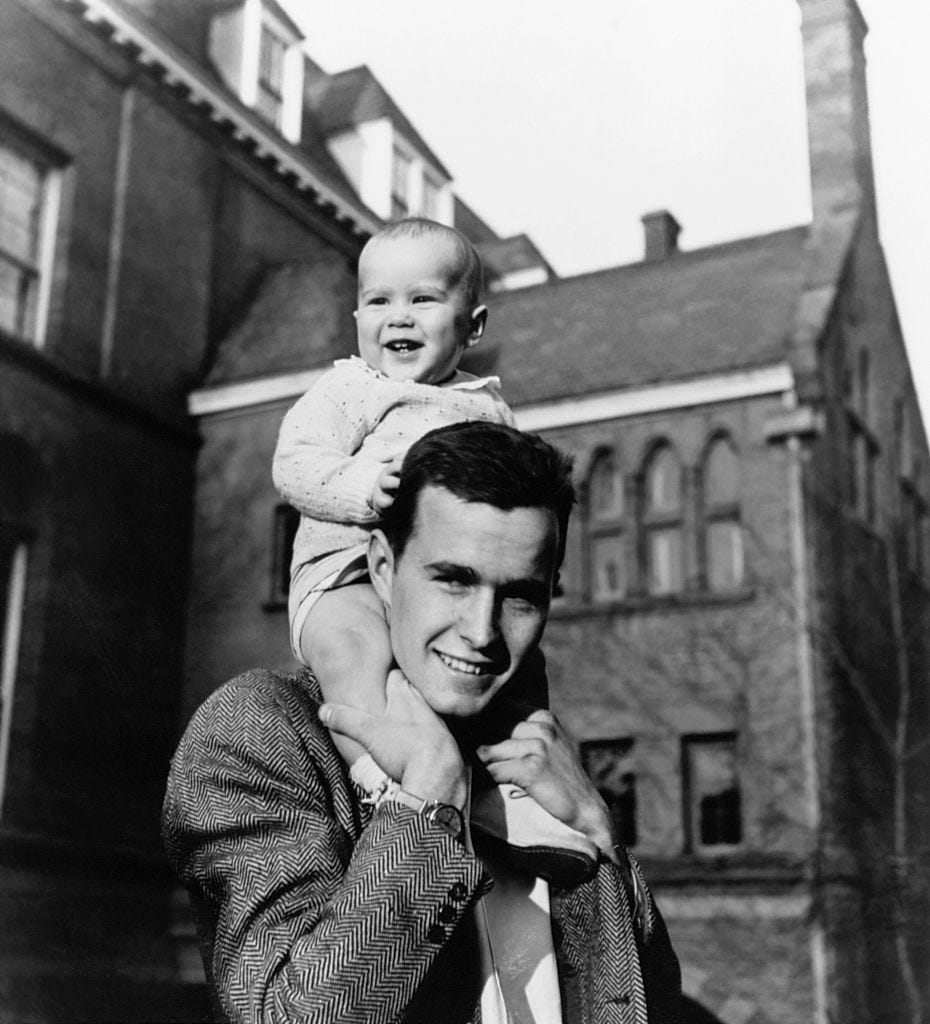

George W. Bush was admitted to Yale, after modest academic achievements at Andover, in 1964. As it happened, the Yale of that era was one of many elite schools just beginning a push to “nationalize” their student body. Fewer people from prep schools and the Northeast; more from other backgrounds (and races) and other parts of the country and world. For most of the Ivies, this also meant admitting women for the first time.

A standard Old Grad line at any elite school is, “They’d never let in someone like me these days.” In his Old Grad mode George W. Bush used to say that about Yale. He was absolutely right, and that is less a reflection on him than on the larger changes in admissions.

Very obviously, admissions to elite schools still have a long way to go in their openness and democratization. Yet compared with their operations before the 1960s, they’ve become more diverse and representative of their nation and the world.

But—and this is the but point—broader, more democratic admissions have become much more competitive admissions. More people, with more money, from more places, are contending for a relatively fixed number of slots at “famous” schools.

The pressure for name-brand admission is crazy in the big-picture sense, since there’s a hazy-at-best connection between the best-known schools and what is best for any specific student. Check all those Atlantic and Washington Monthly links below for chapter-and-verse.

It’s also crazy as a matter of simple math. The overwhelming majority of colleges and universities in America accept most students who apply.

But of course that’s like saying “There are plenty of houses in the United States.” There are still only so many in [Palo Alto / Aspen / the Upper East Side / name your site]. And unlike the competition for prime-location real estate, elite colleges can’t simply let prices rise to reflect demand. (Indirectly, though…)

Thus the tiny handful of American institutions that have become name brands around the world have been under ever-growing global applicant pressure. For instance: just as the current strongman nationalist leader of China, Xi Jinping, was solidifying his control in Beijing, his daughter was in Cambridge, Mass., studying for her Harvard undergraduate degree. (See Evan Osnos’s story on her.)

The resulting situation is distorting for those schools; it’s insanely pressurized for students; and it serves no clear educational or public goal.

Which brings us to: ratings and rankings.

2) How things got worse: you get more of what you measure.

Any bureaucrat or business person knows the maxim: what gets measured, gets done. What has gotten publicly measured about U.S. higher education has often been destructive and led to vicious cycles. This inevitably involves the rankings featured by US News.

As admissions pressure and anxiety crept up in the 1980s, the leaders of US News had a brilliant journalistic-business insight. You can read the back story here, from Ralph Frammolino, in 1994 in the LA Times.

The essence is that the magazine realized it could satisfy a growing national and world demand for guidance about higher education. A family in Oregon—or Taiwan—might be interested in small colleges in the Midwest, where they’d never been. How could they learn more? The US News “Best Colleges” guidebooks were a start.

The problem came from an additional journalistic-marketing insight: People are suckers for lists. The AP has its week-by-week polls on the best football teams. Why not do the same for colleges? The result was a preposterous-on-its-face attempt to “rank” institutions that were set up for entirely different purposes.

Is Caltech “better” than Amherst? And which of them is “better” than the University of Chicago? Or West Point? In the real world, the answer is: it depends on what you’re looking for. But in ranking world, one or the other would be “better” than the others, and every year they could move up or down the charts.

Some people in education resisted these rankings for huffiness-and-guild reasons. Who are these outsiders to judge what we do here, inside our ivied walls? (Think: the Bob Balaban character in the recent Netflix series The Chair.)

But more and more of them made the undeniable complaint that applicants, alumni, funders, and others would actually take these rankings seriously—with bad effects. Sure enough, that occurred. Applications rose and fell, and so did donations, depending on whether a school was doing “better” or “worse” in the rankings. University leaders knew they’d be judged as successes or failures, based on whether the school moved up or down three places. Some colleges got better or worse bond ratings, depending on these results.

I won’t go into some of the most egregious specific distortions. Overall, rankings drove destructive behavior by colleges, which drove destructive behavior from students and families, which drove more of the same all around.

The fundamental point is: The most popular rankings were heavily based on inputs. They emphasized advantages the institutions already had (endowment, student-faculty ratio, etc), or advantages the students brought to the school (test scores, etc). They measured privileges, rather than providing any guide to opportunities.

But these rankings were not going away. I know this first-hand, from having dealt with them in two years at the magazine. That is a story for another time.

3) How things could get better: measuring ‘output’ rather than ‘input’.

“No rankings” is not an option. They’re built into today’s media. But “more rankings” is a step, to dilute the power of any one measurement system. And “better rankings” is obviously a goal.

This is where The Washington Monthly has been a pioneer. (For the record: my very first magazine job was at the Monthly, in the 1970s, and I’m a friend of its leaders and staff members.)

Since 2005, the magazine has provided rankings based largely on the results of students’ experiences at given colleges, rather than what the students were like when they showed up. As the latest edition of its college rankings, published just this week, plainly says:

We rate schools based on what they do for the country. It’s our answer to U.S. News & World Report, which relies on crude and easily manipulated measures of wealth, exclusivity, and prestige.

And in his editor’s note, Paul Glastris says that the familiar US News-style ranking…

both reflects and aggravates the higher education sector’s increasing tendency to shower resources on students from affluent backgrounds while sending a trickle to those from poor, working-class, and minority families…

The Monthly’s rankings, by contrast, are crafted to push institutions of higher learning to be engines of upward mobility, scientific progress, and democratic participation.

What does this different ranking system mean, specifically? The Monthly’s criteria have evolved over the years but have consistently emphasized “output rather than input”: the difference that colleges make for their students, their communities, the nation, and the world. You can examine them in detail at their site.

In our travels, my wife, Deb, and I have repeatedly found that the crucial American education institutions of the moment are not the ones that dominate the “best colleges” list. They’re community colleges; “career-technical” academies; land-grant universities; and others, in addition to the crown-jewel research institutions and liberal arts colleges that still distinguish American education.

Higher education is at its best when it creates tomorrow’s opportunities. It is at its worst when it reinforces today’s inequalities. More tools are now at hand to measure, publicize, and thus encourage more of the opportunity-expansion education can provide. Check them out.

For more

For reference, there’s more detail on nearly everything discussed here in these previous pieces:

-Three Atlantic cover stories: “The Case Against Credentialism,” by me in 1985; “The Great Sorting,” by Nicholas Lemann in 1995; and “The Early Decision Racket,” by me in 2001.

-Many stories from The Washington Monthly, starting with “Broken Ranks,” by Amy Graham and Nicholas Thompson, in 2001 and leading through the new September/October 2021 issue of the Monthly, and “A Different Kind of College Rankings,” by Paul Glastris. For the record, Glastris is the long-time editor of the Washington Monthly, who has driven its innovative approach to college ranking; Amy Graham had been director of data research at US News before writing the story with Nicholas Thompson; and Thompson, then a writer and editor at the Monthly, is now The Atlantic’s CEO. He also wrote this story about rankings in 2003 for the New York Times.

I have been partial to Colleges that Change Lives. I was well past my undergraduate/graduate school days when it was first published. However, I still refer many young people to the list. One of mine went to Evergreen, for example, which was an excellent choice given their strengths. Unfortunately, experiencing the implosion of Bret Weinstein was not a lesson I expected.

https://ctcl.org/

This post makes excellent points on how to satisfy Americans' desires for ranking universities in ways that don't cater to the baser instincts for positional goods. I'd like to add a modest proposal for a more minor change: please don't update these lists annually.

Most universities and colleges are large institutions which, like all big organizations, have lots of inertia. By all rights, rankings should change slowly and ponderously. But all too often that's not what we see. In the US News rankings, we see MIT go from #7 to #3 between 2017 and 2018, and the University of Chicago go from #3 to #6 between 2019 and 2020. This is ridiculous, of course, but without some amount of variation, no one would buy the US News product (or any other ranking organization's output).

Still, just don't do it. Maybe rank universities every five years, but not every year.